When companies talk about adopting AI, the conversation usually starts in a familiar place: identifying a workflow that could be improved.

Customer support. Reporting. Compliance. Internal research.

The instinct is to build—or buy—a tool that solves that specific problem. Something concrete, owned, and controlled. It fits neatly into how organisations already think about software: define the use case, ship a solution, roll it out.

But that’s only part of the story.

At the same time, something quieter is happening across teams. People are finding their own ways to use AI. They’re stitching together prompts, small automations, bits of logic. Not in a formal, sanctioned way—just whatever helps them get their work done faster.

These two approaches—top-down tools and bottom-up workflows—aren’t just different strategies. They’re based on very different assumptions about how work should happen.

The top-down model is easy to understand because it mirrors how enterprise software has always worked. You identify a need, invest in a solution, and try to standardise behaviour across the organisation. There’s comfort in that. It creates a sense of control. Outputs are consistent, processes are visible, and responsibility feels contained within the system itself.

But that control comes at a price. These systems are expensive to build, slow to adapt, and often based on a snapshot of how a workflow looked at a particular moment in time. By the time they’re deployed, the reality on the ground has usually shifted. Work rarely stays still long enough to be neatly captured in a single product.

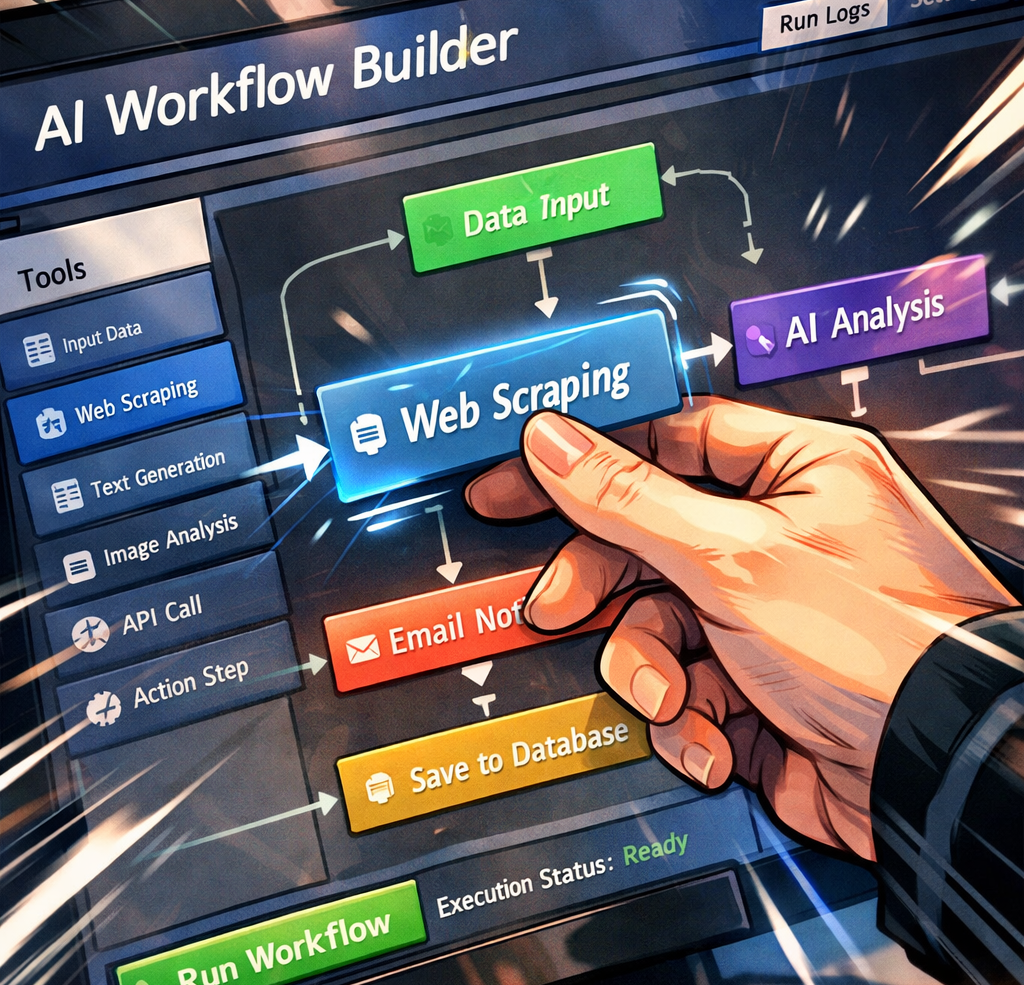

The alternative looks messier. Instead of building one tool per workflow, you give people the ingredients (access to models, simple ways to chain steps together, the ability to automate parts of their work) and let them figure out what’s useful. One example I’ve seen is startups giving everyone access to n8n (a workflow builder with AI/agent capabilities). OpenAI’s Agent Builder is an alternative. For larger organisations that already use Microsoft products, there is Agent Builder in Microsoft 365 Copilot.

This spreads quickly because it maps more closely to how work actually happens. People tweak things. They experiment. They build small, imperfect solutions that evolve over time. The cost is lower, and the pace is much faster.

But it also introduces discomfort. Not everyone feels confident building something they’re expected to rely on. There’s less consistency, and from a management perspective, less visibility into what’s actually running.

At first glance, this looks like a straightforward trade-off between control and flexibility. But that framing misses something more important.

What really changes between the approaches is where responsibility sits.

In a centralised system, responsibility tends to drift towards the tool. If something goes wrong, it’s easy to point at the system and say, “that’s what it produced.” The logic is abstracted away. Most users don’t fully understand how decisions are being made, and they’re not expected to.

When people build their own workflows, that dynamic shifts. They know what they put together. They chose the prompts, the steps, the structure. If the output is wrong, it’s harder to outsource responsibility, but the fix can be near-instant. The system isn’t something separate; it’s an extension of how they chose to work.

That’s a fundamentally different posture, and it’s one many organisations aren’t used to encouraging.

This is also why centralised approaches can fail in quieter ways than expected. On paper, they offer governance and standardisation. In practice, if they’re too rigid or slow, people route around them. They open a new tab, try something themselves, and keep going. What emerges is a layer of unofficial workflows that no one has visibility into, even though they’re where a lot of the real productivity gains are happening.

It’s not that control disappears. It just becomes harder to see.

On the other side, the idea that users aren’t “technical enough” to build their own workflows is becoming less true by the month. The tools are improving quickly: these “Agent Builders” even come with a built-in agent to help you build your own agents. The real barrier isn’t capability so much as confidence. People are wary of being accountable for something they don’t fully trust yet, especially when the consequences of being wrong are unclear.

The organisations that navigate this well will probably land somewhere in between. Not by trying to fully control how AI is used, but by shaping the environment in which it’s used. Providing shared infrastructure, visibility, and guardrails—while still letting people build and adapt their own workflows on top.

A third way is emerging: an off-the-shelf builder, designed for a specific domain (e.g. finance) and hosted by the organisation (for security and governance), where users can build, manage and share workflows in a faster, more coordinated way. An example I’ve seen is Model ML, a platform for finance companies to speed up the safe rollout of AI.

That’s a different kind of control. Less about enforcing a single way of doing things, more about making sure whatever emerges is understandable and accountable.

Which brings things back to a slightly uncomfortable question.

When an AI system produces the wrong output, who owns that outcome?

For all the discussion about models, tools, and cost, that question tends to sit in the background. But it’s the one that ultimately determines how far any organisation is willing to go.

Because adopting AI isn’t just about introducing new capabilities. It’s about deciding how responsibility is distributed once those capabilities are in place.