For the last few years, AI product design has largely revolved around one thing: the chat interface.

Prompt in, response out.

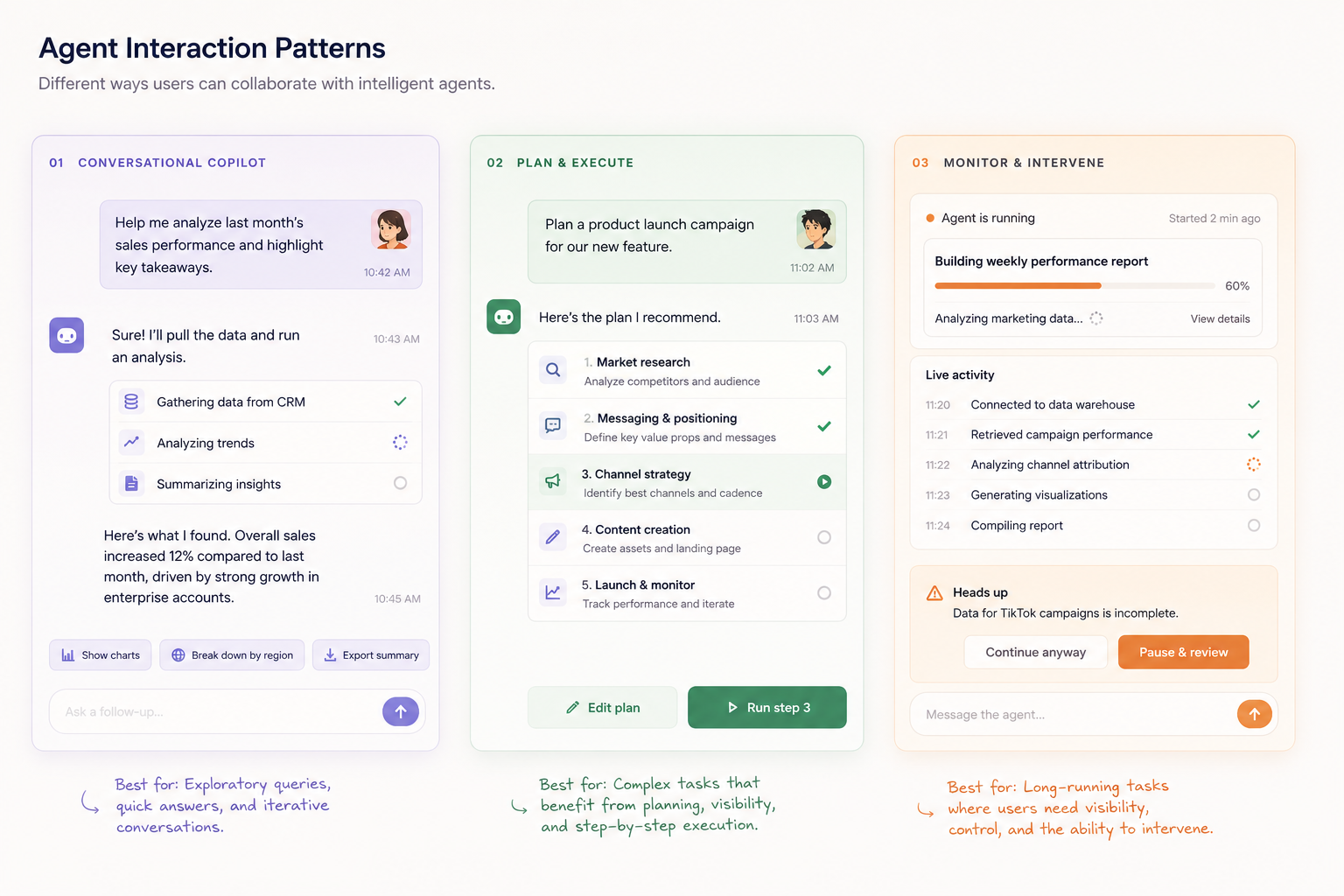

But the next wave of AI products is shifting away from “chatbots” toward agentic interfaces: systems that can reason, take actions, use tools, maintain context, and work toward goals over time.

The design challenge is changing too.

We’re no longer just designing prompts and response states. We’re designing:

- autonomy

- visibility

- trust

- orchestration

- interruption

- collaboration between humans and agents

A good agentic interface doesn’t feel like typing into a magic box. It feels like supervising a capable system.

3 Design Principles for Agentic Interfaces

1. Show intent, not just output

Traditional interfaces optimise for results.

Agentic systems need to expose reasoning and direction:

- what the agent is currently doing

- why it chose that action

- what tools it’s using

- what it plans to do next

Users build trust through visibility.

This is why activity feeds, execution graphs, timelines, and step-based workflows are becoming common patterns in AI-native products.

Interfaces should answer:

“What is the system trying to achieve right now?”
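One way to make intent visible is to have the agent emit structured activity events and fold them into a single snapshot the UI can render. The sketch below is a minimal TypeScript illustration under that assumption. The event kinds (`goal_set`, `step_started`, `tool_called`, and so on), the `IntentView` shape, and `deriveIntent` are hypothetical names, not part of any particular library or protocol.

```typescript
// Hypothetical event shape for an agent activity feed.
// These names are illustrative; they are not taken from any specific library or protocol.
type AgentEvent =
  | { kind: "goal_set"; goal: string; at: number }
  | { kind: "step_started"; stepId: string; description: string; reason: string; at: number }
  | { kind: "tool_called"; stepId: string; tool: string; at: number }
  | { kind: "step_finished"; stepId: string; at: number }
  | { kind: "plan_updated"; nextSteps: string[]; at: number };

// Snapshot the UI surfaces to answer:
// "What is the system trying to achieve right now?"
interface IntentView {
  goal: string | null;                                      // what the agent is working toward
  currentStep: { description: string; reason: string } | null; // what it is doing, and why
  activeTools: string[];                                    // tools used by the current step
  nextSteps: string[];                                      // what it plans to do next
}

// Fold the (append-only) event stream into the current intent snapshot.
function deriveIntent(events: AgentEvent[]): IntentView {
  const view: IntentView = { goal: null, currentStep: null, activeTools: [], nextSteps: [] };
  let currentStepId: string | null = null;

  for (const e of events) {
    switch (e.kind) {
      case "goal_set":
        view.goal = e.goal;
        break;
      case "step_started":
        // A new step replaces the previous one; reset its tool list.
        currentStepId = e.stepId;
        view.currentStep = { description: e.description, reason: e.reason };
        view.activeTools = [];
        break;
      case "tool_called":
        // Only show tools belonging to the step that is currently running, without duplicates.
        if (e.stepId === currentStepId && !view.activeTools.includes(e.tool)) {
          view.activeTools.push(e.tool);
        }
        break;
      case "step_finished":
        if (e.stepId === currentStepId) {
          currentStepId = null;
          view.currentStep = null;
          view.activeTools = [];
        }
        break;
      case "plan_updated":
        view.nextSteps = [...e.nextSteps];
        break;
    }
  }
  return view;
}
```

Keeping the feed as an append-only event log means the same data can drive an activity feed or timeline, while `deriveIntent` answers the narrower "what is it doing right now?" question.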

2. Design for interruption and steering

Agents shouldn’t operate like black boxes running endlessly in the background.

Good agent UX allows users to:

- pause execution

- redirect goals

- edit plans mid-flight

- approve sensitive actions

- intervene when confidence is low

The interaction model shifts from command execution toward collaboration.

The best agentic products will likely feel less like using software and more like directing a team member.
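A rough way to model these controls is a small state machine around each agent run: the user can pause, resume, or redirect it, and the agent must propose each action before executing it. This is a hedged TypeScript sketch; `SteerableRun`, `ProposedAction`, and the default 0.7 confidence threshold are illustrative assumptions, not any framework's API.

```typescript
// Hypothetical run states; names are illustrative assumptions.
type RunStatus = "running" | "paused" | "awaiting_approval";

interface ProposedAction {
  description: string;
  sensitive: boolean; // e.g. sends email, spends money, deletes data
  confidence: number; // 0..1, as self-reported by the agent (assumption)
}

class SteerableRun {
  goal: string;
  plan: string[];
  status: RunStatus = "running";
  pending: ProposedAction | null = null;
  readonly log: string[] = [];
  private readonly minConfidence: number;

  // minConfidence = 0.7 is an arbitrary illustrative default.
  constructor(goal: string, plan: string[], minConfidence = 0.7) {
    this.goal = goal;
    this.plan = [...plan];
    this.minConfidence = minConfidence;
  }

  pause(): void {
    if (this.status === "running") this.status = "paused";
  }

  resume(): void {
    if (this.status === "paused") this.status = "running";
  }

  // Redirect the goal mid-flight, replacing the plan with a user-edited one.
  redirect(goal: string, plan: string[]): void {
    this.goal = goal;
    this.plan = [...plan];
    this.log.push(`redirected to: ${goal}`);
  }

  // Called by the agent before acting. Nothing runs while paused or waiting,
  // and sensitive or low-confidence actions stop until a human decides.
  propose(action: ProposedAction): "executed" | "needs_approval" | "blocked" {
    if (this.status !== "running") return "blocked";
    if (action.sensitive || action.confidence < this.minConfidence) {
      this.pending = action;
      this.status = "awaiting_approval";
      return "needs_approval";
    }
    this.log.push(`executed: ${action.description}`);
    return "executed";
  }

  // Human decision on the pending action; either way, the run continues.
  decide(approve: boolean): void {
    if (this.status !== "awaiting_approval" || this.pending === null) return;
    this.log.push(`${approve ? "approved" : "rejected"}: ${this.pending.description}`);
    this.pending = null;
    this.status = "running";
  }
}
```

The key design choice is that approval is a gate inside `propose`, not a confirmation dialog bolted on afterwards: sensitive or low-confidence actions cannot run until a human calls `decide`.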

3. Persistent context is part of the interface

In chat interfaces, memory is often hidden.

In agentic systems, context becomes a core UI primitive.

Users need visibility into:

- what the agent remembers

- active goals

- available tools

- current tasks

- previous decisions

- long-running workflows

This means the interface expands beyond a single conversation thread into dashboards, workspaces, task graphs, and shared state systems.

The UI is no longer just a “frontend for prompts.”

It becomes an operational layer for human-agent collaboration.
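To treat context as a UI primitive, it helps to keep it in one explicit, inspectable structure and change it only through named updates. A minimal TypeScript sketch, assuming a reducer-style store; `AgentContext`, `ContextUpdate`, and `applyUpdate` are hypothetical names chosen to mirror the list above.

```typescript
// Hypothetical shape of the shared context a workspace UI might render.
// Field names mirror the list above; they are illustrative assumptions.
interface Decision { summary: string; rationale: string }
type TaskState = "queued" | "running" | "blocked" | "done";
interface Task { id: string; title: string; state: TaskState }

interface AgentContext {
  memory: string[];      // what the agent remembers
  goals: string[];       // active goals
  tools: string[];       // available tools
  tasks: Task[];         // current tasks and long-running workflows
  decisions: Decision[]; // previous decisions, newest last
}

// Updates are small, named operations, so the UI can show what changed
// instead of the agent silently rewriting its own memory.
type ContextUpdate =
  | { op: "remember"; fact: string }
  | { op: "forget"; fact: string }
  | { op: "set_task_state"; id: string; state: TaskState }
  | { op: "record_decision"; decision: Decision };

// Pure reducer: returns a new context and never mutates the old one,
// which keeps history, diffing, and undo straightforward.
function applyUpdate(ctx: AgentContext, u: ContextUpdate): AgentContext {
  switch (u.op) {
    case "remember":
      // Remembering a known fact is a no-op and returns the same object.
      return ctx.memory.includes(u.fact) ? ctx : { ...ctx, memory: [...ctx.memory, u.fact] };
    case "forget":
      return { ...ctx, memory: ctx.memory.filter((f) => f !== u.fact) };
    case "set_task_state":
      return { ...ctx, tasks: ctx.tasks.map((t) => (t.id === u.id ? { ...t, state: u.state } : t)) };
    case "record_decision":
      return { ...ctx, decisions: [...ctx.decisions, u.decision] };
  }
}
```

Because each update returns a new object, the interface can keep a history of snapshots, show a diff of what the agent just learned or decided, and let the user roll back a bad memory.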

Libraries and Frameworks Worth Exploring

A few projects are starting to define the interaction layer for agentic products:

- AG-UI: an open, event-based protocol that standardises how agent backends connect to user-facing applications

- Vercel AI SDK / Chat SDK: production-ready primitives for streaming AI interfaces and multi-model interactions

- CopilotKit: React components and infrastructure for in-app AI copilots and agent experiences

The interesting shift is that these libraries are not just adding chat widgets to existing apps; they are helping define a new interface paradigm altogether.